The QA Revolution: How AI Is Rewriting the Rules of Software Quality (feat. Tanvi Mittal)

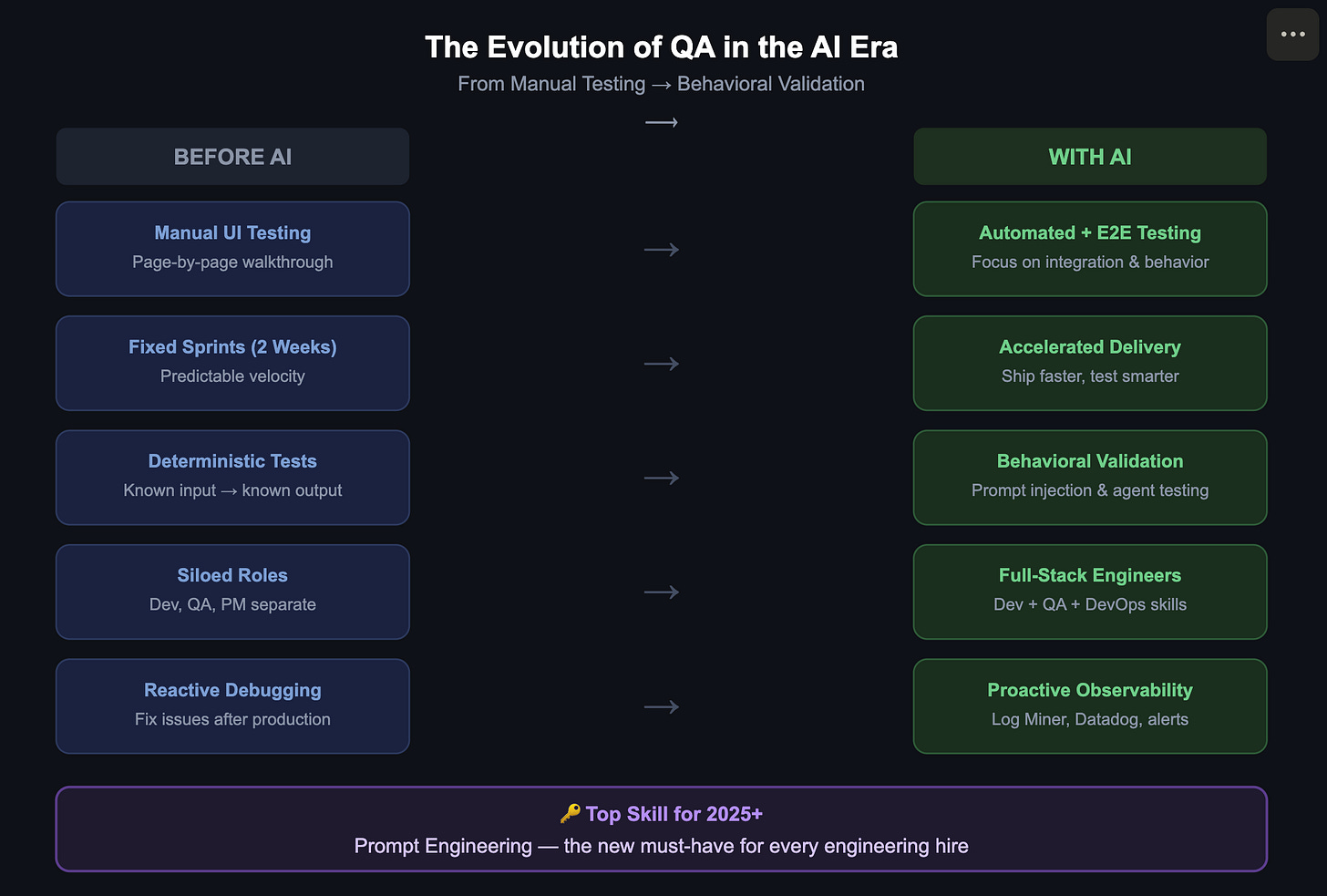

The QA role is evolving — not disappearing — as AI accelerates development, demanding behavioral testing, observability, and prompt engineering skills.

There’s a quiet crisis unfolding inside software engineering teams everywhere. Code is being written faster than ever — in some cases, features that once took weeks now take a single day. But here’s the uncomfortable question nobody is asking loudly enough: who’s checking the work?

Tanvi Mittal (GitHub) has spent over 15 years in software quality — starting as a developer, moving into test automation, and now sitting at the sharp edge of a field being fundamentally reshaped by AI. In a recent conversation on the Snowpal Podcast, she offered a candid, street-level view of what’s actually happening inside engineering teams today.

Podcast

A conversation with Tanvi Mittal, AI Systems & Quality Engineering Expert — on Apple and Spotify.

The Speed Problem No One Is Solving

The shift is striking. AI coding tools have dramatically compressed development timelines, but quality assurance hasn’t kept pace. “The ship to production has increased because now we have a lot of tools which we can leverage to code faster,” Tanvi observed. “But that has not been taken care of so seriously compared to the development part of it.”

In other words: teams are shipping more, but not necessarily testing more. If a sprint that once yielded 10 features now yields 30, the test coverage isn’t automatically tripling with it. That gap — between velocity and validation — is one of the defining challenges of modern software development.

The Tester Is Not Disappearing — But the Job Is Unrecognizable

Ask Tanvi whether the role of the manual tester still exists, and she’ll answer without hesitation: it’s “going super fast.” The person who walks through UI screens page by page, checking boxes manually, is largely a relic. In its place is something harder to define but far more demanding.

The industry is converging on what she calls full-stack quality: developers writing their own functional tests, and QA engineers shifting their focus to end-to-end integration, cross-system behavior, and — increasingly — AI-specific testing. “We definitely need a lot of quality checks around that,” she said. “I don’t see that QA is going anywhere soon.”

What is changing is the nature of the work. QA teams at large enterprises are now expected to be the first responders when something breaks in production. They’re digging through logs, tracing root causes, and managing the complexity of systems where dozens of services interact. That’s a very different job from checking if a button works.

Testing AI Is Not Like Testing Anything Else

Here’s where the conversation gets genuinely new territory. When the thing you’re testing is itself an AI — an agent, a chatbot, a decision-making system — traditional test cases stop making sense.

“The outputs can be different,” Tanvi explained, “but the gist of the work done by that agent should be the same.” You can’t write a test that expects a single, deterministic output. Instead, you’re validating behavior: does the agent do what it’s supposed to do, across a wide range of inputs, without doing what it’s not supposed to do?

That second part is where prompt injection comes in — the AI equivalent of SQL injection. A well-designed financial chatbot, for instance, should calculate loan payments. It should not be coaxed into writing Python code for a user just because they asked nicely. “If it’s giving me that, then it’s unnecessary use of tokens,” Tanvi noted, “and all those kinds of testing and data validation needed to be done.”

This kind of behavioral testing requires a fundamentally different mindset. It’s less about deterministic pass/fail and more about probabilistic trust: does this system behave reliably and safely, at scale, over time?

AI-Generated Code Still Needs Human Eyes

One of the more nuanced points Tanvi made is about the limits of trusting AI-generated tests. When an AI tool auto-generates 100 test cases alongside the code it writes, around 30 of them may be useless — technically invalid in the real production environment, or simply missing the edge cases that matter.

“You are not spending time on writing the code yourself. You are spending time to iterate through the code what is written by the AI and then updating that based on where it is not correct.” The same applies to tests.

This is a subtle but critical insight. The role of the QA engineer isn’t going away — it’s being elevated. The new job is judgment: knowing which tests are real, which edge cases an AI missed, and where the system’s behavior might diverge from expectation in the wild.

Governance, Observability, and the Rogue Agent Problem

As AI agents get more authority — taking actions, making decisions, operating autonomously inside complex systems — the stakes for getting testing wrong go up dramatically. A rogue AI agent might cause significant damage before anyone notices.

Tanvi’s answer to this is observability. Her focus has shifted toward log monitoring and production behavior analysis: catching anomalies early, before they become incidents. She’s even built an open-source tool called Log Miner to support this kind of proactive monitoring.

Tools like Datadog play a central role here — not just as passive log aggregators, but as active early-warning systems. Teams set custom alerts, monitor dashboards in real time, and treat unusual patterns as signals worth investigating before customers notice. “Before the customer points it out, there are a lot of checks and monitoring happening where you can figure out and change before it goes out of your control.”

Interestingly, she also flagged a gap: QA teams are rarely involved early enough in what gets logged and how. If logs are poorly structured, too noisy, or missing key traceable information, debugging production issues becomes exponentially harder. That’s starting to change — QA engineers are increasingly being brought into conversations about logging standards, not just the applications themselves.

FinTech Moves Slower, and For Good Reason

One of the more grounding moments in the conversation was Tanvi’s pushback on the narrative that AI is visibly transforming every software product. In regulated industries like banking and healthcare, that’s simply not what’s happening on the surface.

“FinTech is a sector where AI is not able to show a lot of impact because we have a lot of constraints,” she said. The improvements are real, but they’re mostly invisible to end users: faster deployment pipelines, automated backend processes, modernized APIs. A deployment that once took four to five hours now happens in two clicks. That’s meaningful progress — but you wouldn’t see it from your banking app.

The implication is important: the “AI is changing everything overnight” narrative is largely true for startups and smaller companies, not for enterprises operating in regulated spaces where trust, compliance, and stability rightly slow things down.

The Skill That Matters More Than Any Other

Near the end of their conversation, Tanvi was asked what she’d look for when hiring today that she wouldn’t have looked for two or three years ago. Her answer was unambiguous: prompt engineering.

“How good (they are) at prompt engineering — that is the one thing.” Combined with attitude and genuine dedication to the work, that’s the hiring filter she’d apply now.

It’s a telling signal. The ability to communicate precisely with AI systems — to construct clear, bounded, effective prompts — has become a professional skill, not just a party trick. It’s now table stakes for anyone working in or around software development.

Change Is the Only Constant (And Most People Are Lagging)

Perhaps the most honest thread running through the conversation was about the gap between what people say and what they actually do. Most parents — including Tanvi — are rethinking what success looks like for their kids in an AI-shaped world. Most professionals acknowledge that the skills needed to stay employable are shifting fast.

And yet. The same two-week sprints. The same college applications. The same job searches for traditional roles.

“We are in a world where every day we have to learn new stuff to be accommodating with the technologies shifting,” Tanvi said in her closing. “That’s it. We are learners every day.”

It’s a simple statement, but it cuts to the heart of what’s being asked of everyone in this industry right now — not just QA engineers. The people who will navigate this era well are the ones who treat learning not as a phase, but as a permanent condition of professional life.

Q&A with Tanvi Mittal

Q: Can you tell us a little about your background?

I have 15-plus years of experience in software. I started as a developer, then moved into quality engineering, working closely with large enterprises to build automation frameworks and test React and Angular-based applications. More recently, I’ve been focused on the AI side — how AI and AI agents are affecting software, and how we can carefully test them without leaking bugs into production.

Q: How has testing fundamentally changed with the rise of AI tools?

The biggest shift is that code is being shipped to production much faster because developers now have powerful tools to write code quickly. But the investment in testing hasn’t kept pace with that acceleration. If a lot of code is being generated in one week but we don’t allocate enough capacity for testing, that’s a serious gap. Speed without quality is a risk.

Q: Is the manual tester — someone who walks through UI pages by hand — still a relevant role?

That role is going away very fast. We’re moving toward what I’d call full-stack quality, where testers are also developers and developers are also testers. In smaller teams, that’s already the norm. In large enterprises, the shift is happening now. The QA focus is increasingly on end-to-end integration testing — where many systems interact — rather than checking individual pages manually.

Q: So is QA as a profession disappearing?

Not at all. The need is evolving, not shrinking. We now need people who can intelligently validate the behavior and output of AI agents and LLMs. That requires a very different skill set than traditional testing — but it’s very much in demand. I don’t see QA going anywhere soon.

Q: How do you test code that wasn’t written by a human?

For traditional software, we run it through the same test cases we’d apply to human-written code — plus a quality check on the generated code itself. For AI agents, it’s different. You’re doing behavioral testing: given a wide range of inputs, is the agent producing outputs that are consistent with its intended purpose? The outputs may vary, but the underlying behavior should be reliable.

Q: Can you explain prompt injection and why it matters for QA?

Prompt injection is to AI agents what SQL injection is to databases — it’s a way of manipulating a system into doing something it shouldn’t. For example, imagine a financial chatbot designed only to calculate loan payments. If a user can prompt it into writing Python code or revealing system instructions, that’s a security failure. Part of our job is to test that agents stay within their intended boundaries, no matter how creatively users phrase their requests.

Q: AI tools can auto-generate test cases alongside the code. Does that eliminate the need for human testers?

Not yet. In my experience, if an AI generates 100 test cases, around 30 of them may be impractical or invalid in a real production environment, and it often misses important edge cases. You still need a human to evaluate which tests are meaningful and which aren’t. The time saving is real — but the judgment required to use AI-generated tests responsibly still belongs to a person.

Q: How does QA fit into faster delivery cycles? Are two-week sprints still the norm?

In large enterprises, two-week sprints are still common. QA joins on day one — we sit with developers, understand what’s changing, assess the impact on other systems, and begin defining test cases. By day three we’re refining those cases. Developers handle about 80% of test automation, and our team focuses on the integration and end-to-end layer — making sure all the systems that touch the change are working together correctly.

Q: How are you seeing team structures change?

In startups, the change is dramatic — one person often covers product, development, and QA. In large enterprises, the shift is more gradual but visible. Product owners are managing three products instead of one. QA engineers are being asked to handle DevOps tasks like deployments and root-cause analysis. The days of narrowly defined, single-skill roles are fading. Everyone has to wear multiple hats.

Q: What does good AI governance look like in practice?

Observability is the foundation. You need to monitor production logs continuously so that when something goes wrong with an AI agent, you catch it before customers do. I built an open-source tool called Log Miner for this purpose. Tools like Datadog are central to this — you set up custom alerts, watch dashboards in real time, and treat anomalies as early warning signals rather than waiting for incidents to escalate.

Q: Why does FinTech seem slower to adopt AI visibly?

Because trust is everything in financial services. The customers’ data and money are on the line — that creates a high bar for introducing AI. A lot of progress is happening, but it’s behind the scenes: API modernization, automated deployments, internal tooling. Things that dramatically improve velocity for engineering teams but aren’t visible to the end user. That’s appropriate caution, not stagnation.

Q: Is a college degree in software still worth pursuing?

Honestly, it’s complicated. For fields like medicine or law, formal education is non-negotiable. For software engineering, the diploma is less critical than the skills — and the skills needed are changing faster than most curricula can keep up with. Personally, I wouldn’t push my kids toward software development the way previous generations were pushed. I’d want them to understand AI, not just code. That said, from a cultural standpoint, many families — including mine — haven’t fully made that mental shift yet.

Q: Are laid-off workers turning to entrepreneurship?

Most people are still looking for stable employment first. Business is not easy money — anyone who’s run a company knows that. Most people won’t leave a job until their business is already generating revenue. If someone gets laid off without a business plan, their first instinct is to find another job. Entrepreneurship tends to be the second choice, not the first.

Q: What’s the one skill you’d look for in a new hire today that you wouldn’t have cared about three years ago?

Prompt engineering. How well someone can communicate with AI systems — constructing precise, effective prompts — is now a core professional skill. Beyond that, I look for attitude: commitment, adaptability, and genuine dedication to the work. Those qualities matter more than ever in a world where the tools change every few months.

Q: Any final advice for people navigating this shift?

We are in a world where you have to learn something new every single day to keep up with how fast technology is moving. The people who will thrive aren’t necessarily the most experienced — they’re the most adaptable. Treat learning not as something you did in school, but as a permanent part of how you work.

Tanvi Mittal is an AI systems and quality engineering expert specializing in testing, reliability, and security in LLM-powered applications. This article is based on her appearance on the Snowpal Podcast, hosted by Krish Palaniappan.