From SEO to AEO: How to Optimize Your Website for AI Agents (feat. Frank Vitetta)

The article covers how AI is reshaping SEO, urging marketers to optimize websites for AI agents using markdown, schema markup, and APIs.

A conversation between Krish Palaniappan, CEO of Snowpal, and Frank Vitetta, CEO of Orchid Box, LLM Scout, and CodeScout.

Podcast

Your Website Is Invisible to AI: Here’s How to Fix It — on Apple and Spotify.

The SEO Crisis No One Saw Coming

For decades, the rules of search engine optimization were clear: rank high on Google, drive traffic, convert visitors. That playbook is now being rewritten at speed.

According to Frank, a digital marketing expert and founder of LLM Scout, his agency is seeing average traffic drops of 30–35% year over year across clients — and some are experiencing drops as steep as 80%. The culprit isn’t a Google algorithm update. It’s the rise of AI.

“Google and LLMs — ChatGPT, Claude, and others — they tend now to reply directly to the user,” Frank explains. “So there is no reason for people to go and browse websites. For my clients, that’s a big problem.”

Combined with stricter GDPR enforcement in Europe, which requires explicit user consent before analytics fires, marketers are flying increasingly blind. But the story isn’t as bleak as those numbers suggest.

Is SEO Dead? Not Exactly — But It’s Transforming

Despite falling click-through rates, SEO remains foundational — because LLMs still rely on it.

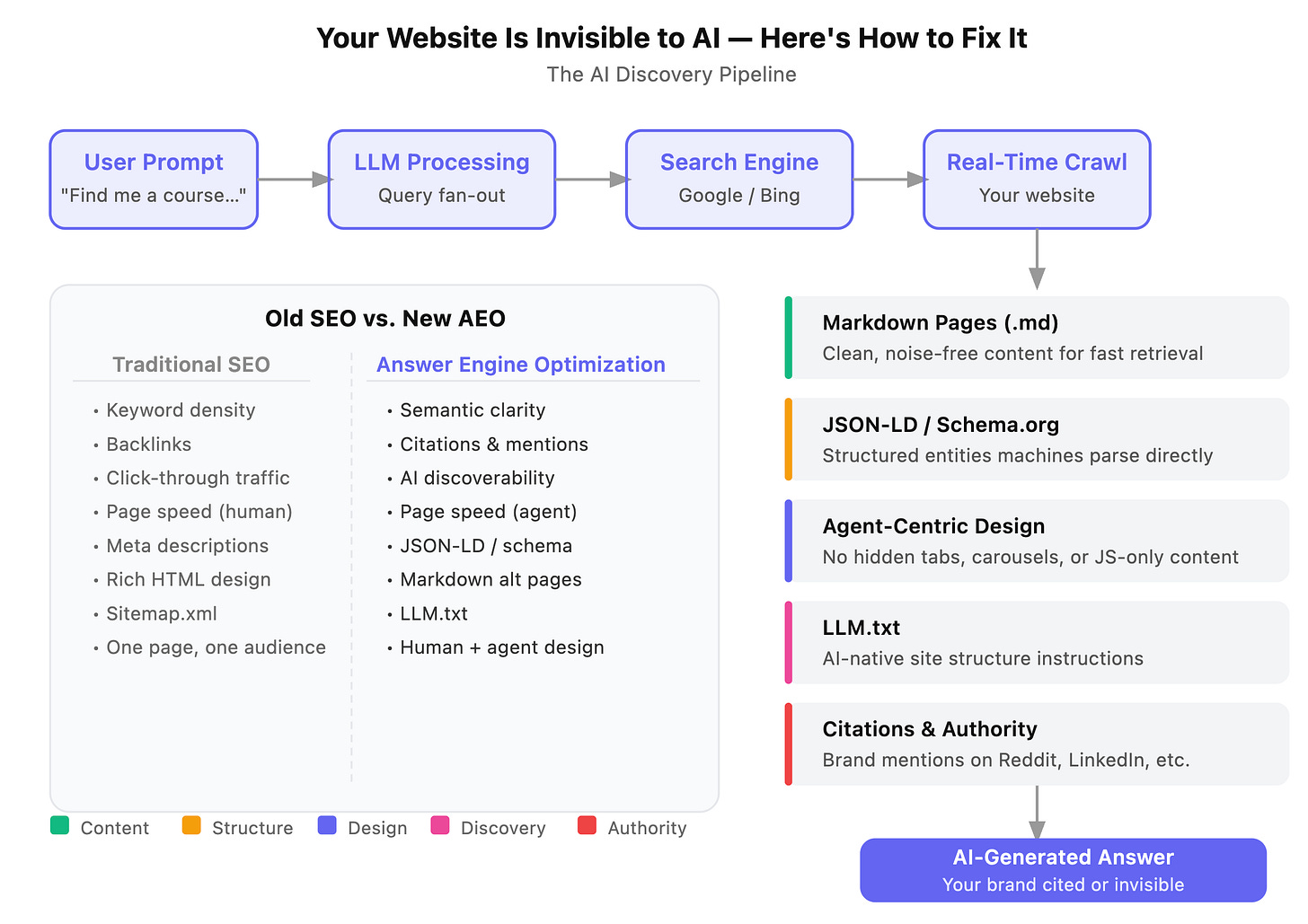

Frank points to a striking statistic: roughly 18% of Google’s traffic today comes from LLM bots. When you ask ChatGPT or Claude a question they can’t answer from training data, they perform live Google searches to gather information. They break your prompt into multiple search queries — a process called “query fan-out” — then crawl the top results in real time to synthesize an answer.

“If you’re still number one in Google, the LLM will recommend you,” Frank says. “The user isn’t clicking the link, but the company is still being discovered.”

The shift, then, isn’t from SEO to something else. It’s from SEO to AEO — Answer Engine Optimization — a discipline focused on making your content readable, trustworthy, and accessible not just to humans, but to AI agents acting on their behalf.

Introducing the Third Web: Markdown Pages for AI Crawlers

One of the most practical strategies Frank recommends is creating a markdown (.md) version of your key web pages alongside the standard HTML version.

Here’s the problem markdown solves: when an LLM crawls your site in real time, it only processes a limited amount of content — approximately the first 100 kilobytes of a page. A typical HTML page is bloated with JavaScript calls, CSS, navigation menus, footers, image tags, third-party scripts, and other “noise” that has nothing to do with the actual content. By the time all that noise is cleared, the meaningful content may never make it into the AI’s context window.

Markdown strips all of that away. It retains only the essential structure — headings (H1, H2, H3), bold text, links, and tables — in a lightweight format that LLMs are deeply familiar with, since most of them were trained on markdown-rich datasets.

How to Implement Markdown Pages

The implementation is simpler than it sounds. For each important page, you:

Create a parallel

.mdfile at a predictable URL (e.g.,/blog/article-name.md)Add a single directive in your HTML

<head>tag:

<link rel="alternative" type="text/markdown" href="/blog/article-name.md">

This tells AI crawlers that a cleaner, machine-readable version of the page exists. The HTML page continues serving human visitors and Google’s traditional crawler without any changes.

Frank’s client at lswen.com already has this in production. You can verify it by taking any blog post URL, removing the trailing slash, and appending .md — a stripped-down, content-only version of the article appears instantly.

An Important Caution on Content Parity

Frank flags a critical risk: the markdown version must be substantively identical to the HTML version. Search engines like Google will screenshot your HTML page and compare it to what they can scrape. If the two versions differ meaningfully, you risk a cloaking penalty — the same kind applied to sites that historically hid white text on white backgrounds to game keyword rankings.

Agent-Centric Design: Rethinking How Websites Are Built

Markdown pages address how AI crawls your content. But there’s a second, equally important challenge: how AI agents interact with your pages when they’re taking actions on a user’s behalf.

When a user instructs an agent to “find me a course provider in this space,” the agent doesn’t read your HTML. It visually “sees” your page — essentially taking a screenshot and interpreting what’s there. This is where most modern websites silently fail.

Frank describes a client whose course catalog page had 80% of its content hidden behind tabs. A human visitor instinctively clicks the tabs. An AI agent sees a screenshot, identifies only what’s visually open, and reports back to the user as if the rest doesn’t exist. Entire product lines become invisible.

“We need to have this in mind when designing,” Frank explains. “If I screenshot this page and send it to someone, would they be able to understand that there is a button here, that they need to press something to watch a video?”

Design Principles for Agent Accessibility

The shift Frank advocates isn’t a complete redesign — it’s a new lens applied to existing design decisions:

Avoid hiding content behind interactive elements. Tabs, carousels, accordions, and modals are human-friendly but agent-hostile. If a piece of content matters, make it visible without requiring a click.

Use high contrast and clear visual hierarchy. Agents interpret pages visually. Background images underneath text, low-contrast buttons, and decorative styling can obscure meaning. Black text on white backgrounds, with clear structural hierarchy, performs best.

Redesign mega menus for dual audiences. Interestingly, the mega menu — once dismissed as dated UX — is making a comeback. Frank notes that well-structured mega menus give agents fast access to a site’s most important content areas without requiring deep navigation. EY Parthenon’s site is cited as an example: services and subsections laid out clearly, accessible in one visual sweep.

Carousels are a liability. A carousel only ever shows one item at a time. An agent seeing a screenshot sees one item. Everything else on those slides, for all practical purposes, does not exist.

JSON-LD and Schema Markup: Speaking the Machine’s Language

Long before AI agents arrived, SEOs were enriching web pages with structured data using the schema.org vocabulary and JSON-LD (JavaScript Object Notation for Linked Data). Now, that practice is more valuable than ever.

Schema markup allows you to define entities — companies, products, events, people, reviews, courses, FAQs — in a standardized format that machines parse directly, without hunting through prose for the information. Instead of an AI agent trying to find your phone number or office address buried somewhere in paragraph text, you declare it explicitly:

{

"@context": "https://schema.org",

"@type": "Organization",

"name": "Your Company",

"telephone": "+1-800-000-0000",

"address": { ... }

}

Frank explains that a modern page might carry multiple overlapping schema types: an event, a product listing, an FAQ section, a course, and company information — all described in structured JSON alongside the visual HTML, all invisible to the reader, all immediately legible to an AI.

Google Search Console now surfaces errors in your rich metadata — missing required fields, type mismatches, formatting issues — making it easier to audit and maintain this layer of your site.

LLM.txt: The AI-Native Sitemap

Traditional XML sitemaps tell crawlers what pages exist and when they were last updated. LLM.txt — a newer convention Frank describes — takes a different approach. Rather than listing URLs, it explains how a website is structured in plain language.

An LLM.txt file might say: “This is a B2B consulting firm. Service pages live under /services. New blog content appears at /blog/[slug]. All product pages follow /products/[category]/[product-name].”

It’s less a directory and more a set of orientation instructions — the kind you might give a new employee on their first day.

The adoption of LLM.txt is still uneven. Anthropic has pushed for it; OpenAI has not committed. Frank acknowledges that in practice, most LLMs appear to rely primarily on what’s on the page itself rather than reading either sitemaps or LLM.txt. But the emerging consensus among AEO practitioners is to implement it anyway — the cost is minimal and the potential upside is real.

Citations: The New Backlinks

In traditional SEO, authority was built through backlinks — other websites linking to yours. In the AI-discovery era, the equivalent is citations: your brand name appearing on other credible platforms, even without a link.

LLMs are trained on massive corpora of online text, and sites with high human-generated authority — Reddit, LinkedIn, trusted review platforms — carry disproportionate weight.

“There was a rush on getting your name on Reddit,” Frank recalls. “People found that LLMs loved Reddit and LinkedIn because they have strong spam policies and self-moderating communities. It was seen as human opinion.”

The predictable result followed: marketers flooded those platforms with AI-generated content, platforms adapted their moderation, and the SEO lift faded. But the underlying dynamic remains: authentic presence on authoritative third-party platforms signals trustworthiness to AI systems evaluating which sources to cite.

The lesson isn’t to game Reddit. It’s to build genuine presence where humans actually discuss your industry — because that’s still where AI goes to learn what’s credible.

The Bigger Shift: From Websites to APIs and MCPs

Beneath all the tactical optimizations lies a more fundamental transformation. As Frank and Krish explore in their conversation, the future of software distribution may not be websites or apps at all — it may be APIs and Model Context Protocols (MCPs).

Platforms like Claude’s Cowork already demonstrate the pattern: rather than switching between a dozen separate applications, users interact with one AI interface that connects to all their tools via connectors. Salesforce, HubSpot, Slack — their data and functionality become accessible through a single, personalized layer.

In this world, having a beautiful website matters less than having a well-documented, accessible API. The agent doesn’t visit your homepage. It calls your endpoint.

Frank’s own roadmap reflects this: “My next step is to create an MCP server so that becomes accessible to other tools. You tell the other tools: we exist, this is how you authenticate, this is what you can do. People use our services using other services seamlessly — without even knowing they’re using us.”

Krish echoes this at Snowpal: building an MCP server so AI agents across industries can consume their APIs generically, without needing to know the specific endpoints, with industry-specific sample agents demonstrating the integration model.

The Economic Undercurrent: What AI Is Doing to Jobs

No discussion of AI optimization is complete without confronting its broader consequences. Frank speaks plainly about the UK’s youth unemployment — the highest rate for under-25s on record — and links it directly to AI displacing entry-level roles.

“Junior engineers, junior lawyers — that first job, second job — I think those have completely been replaced by AI,” he says. “The problem is those people are not going to climb the ladder, which means eventually there will be no mid-level, no senior. There will be a gap.”

Both Frank and Krish describe an irony shared by many in technology: the tools that increase individual productivity also compress the on-ramps through which expertise is built. Senior developers still manage and review. But without juniors learning by doing, who becomes senior?

Frank’s advice, when pressed, circles back to something almost old-fashioned: find what you genuinely love and pursue it with adaptability as your core skill. “If you have a passion for something, you will make it happen. It won’t become a job you do for money — it becomes your identity.”

How Few People Are Actually Building With AI

Perhaps the most grounding data point in the conversation comes from a graphic Krish shares — sourced, Frank believes, from Diary of a CEO — breaking down global AI adoption:

84% of the world population has never used AI

16% use a free AI chatbot

0.3% pay for a subscription

0.04% are actively building with AI

At 8.1 billion people, that 0.04% represents roughly 3.2 million builders. For anyone steeped in the AI conversation — reading newsletters, attending podcasts, refreshing LinkedIn — that number is a useful corrective. The urgency feels total because of algorithmic echo chambers. The reality is that the overwhelming majority of the world hasn’t yet clicked “sign up.”

“I’ve been living in AI anxiety,” Frank admits. “As soon as I go to LinkedIn, everything is about AI. I’m constantly bombarded. But then that graph came along and I thought — I need to breathe.”

The Mechanics of AI Crawling and Query Fan-Out

When a user submits a prompt to an LLM like ChatGPT or Claude, the model doesn’t perform a single search — it decomposes the query into multiple targeted sub-queries, a process called query fan-out. Each sub-query hits a search engine independently, returning a set of ranked URLs. The agent then crawls those pages in real time, parsing the raw HTML to extract relevant content. Because most LLMs cap their page ingestion at roughly the first 100 kilobytes, any content buried beneath heavy JavaScript bundles, third-party script calls, and navigation boilerplate may never enter the model’s context window at all. This is why rendering order in the DOM matters: content that appears early in the HTML source has a structurally higher probability of being ingested than content loaded dynamically via JavaScript after the initial parse.

Structured Data and the JSON-LD Signal Layer

Beneath every well-optimized page lies a machine-readable signal layer built on JSON-LD and the schema.org vocabulary. Unlike prose content, which requires natural language processing to extract entities and relationships, JSON-LD declares them explicitly — a product’s price, an organization’s phone number, an event’s start time — in a standardized format that search engines and AI crawlers can parse deterministically. Modern pages often carry multiple overlapping schema types simultaneously: an Organization block in the site header, a Course or Product block in the body, an FAQPage block in the footer. Each additional schema type expands the surface area of structured facts an AI can confidently extract without inference, reducing hallucination risk and increasing the likelihood that your entity is accurately represented when an LLM synthesizes an answer citing your content.

Key Takeaways for Marketers and Builders

If you’re managing a website or building a digital product in 2025, here are the actionable priorities that emerge from this conversation:

Audit your pages for agent accessibility. Take a screenshot of each important page and ask: could an AI agent understand what’s on this page and what actions are available? If the answer involves clicking tabs or swiping carousels, you have work to do.

Add markdown versions of your highest-value content pages. Use the <link rel="alternative" type="text/markdown"> directive to point crawlers to a clean, noise-free version. Keep the content identical to the HTML version.

Implement or expand JSON-LD schema markup. Every entity type your pages represent — company, product, event, FAQ, course, review — should have corresponding structured data. Audit using Google Search Console’s rich results report.

Write an LLM.txt file. Explain your site’s structure in plain language. It costs almost nothing and may provide meaningful upside as LLM adoption of the standard grows.

Build for citations, not just backlinks. Authentic presence on credible third-party platforms still matters. Focus on genuine value — useful content, honest engagement — rather than volume plays that platforms will quickly detect and discount.

Start thinking in APIs. If you’re building anything new, design it to be consumed by agents, not just browsers. An MCP server or well-documented REST API may ultimately drive more distribution than a polished homepage.

FAQ: Optimizing Your Website for AI Agents

Q: Is SEO dead now that AI answers questions directly?

No — but it’s transforming. LLMs still rely on search engines to find information. Roughly 18% of Google’s traffic today comes from AI bots performing real-time searches on behalf of users. If you rank well on Google, AI is still likely to discover and cite you. The difference is that users no longer click through to your site — the AI summarizes the answer for them. SEO remains the foundation; Answer Engine Optimization (AEO) is the layer you now need to build on top of it.

Q: What is Answer Engine Optimization (AEO)?

AEO is the practice of optimizing your website so that AI systems — ChatGPT, Claude, Gemini, and the agents they power — can find, understand, and accurately represent your content. Where traditional SEO focused on ranking signals for human searchers, AEO focuses on machine readability, structured data, agent accessibility, and authoritative citations across the web.

Q: How much traffic are websites actually losing to AI?

On average, sites are seeing traffic drops of 30–35% year over year. Some are experiencing drops as high as 80%, particularly in Europe where GDPR consent requirements have further reduced trackable traffic. The cause isn’t purely AI — privacy regulations are compounding the problem — but AI-generated answers replacing click-throughs is the dominant factor.

Q: What is a markdown page and why do I need one?

A markdown (.md) page is a stripped-down, plain-text version of your web page that contains only the essential content — headings, body text, links, and tables — with none of the HTML noise (JavaScript, CSS, navigation menus, image tags, footers). LLMs were largely trained on markdown-formatted data, so they parse it faster and more accurately. Creating a markdown version of your key pages gives AI crawlers a clean, high-signal file to ingest instead of fighting through a bloated HTML document.

Q: Will having a markdown page hurt my Google rankings?

Not if the content is identical to your HTML page. The critical rule is content parity: the markdown version must reflect the same information as the HTML version. If the two differ meaningfully, search engines may flag it as cloaking — a tactic historically used to show different content to crawlers than to users — and penalize your site. Keep them in sync and you’re safe.

Q: How do I tell AI crawlers that a markdown version of my page exists?

Add a single line to your HTML <head>:

<link rel="alternative" type="text/markdown" href="/your-page.md">

This directive signals to AI crawlers that a machine-readable alternative is available. Your HTML page continues to serve human visitors and traditional search bots without any changes.

Q: How much of my page does an AI actually read?

Most LLMs cap page ingestion at around the first 100 kilobytes of content. On a typical HTML page loaded with scripts, stylesheets, and third-party calls, the actual article content can sit well past that cutoff. A markdown page resolves this by putting only the content — nothing else — in a file that’s almost always well under that limit.

Q: What is agent-centric design?

Agent-centric design means building your website so that an AI agent — which perceives pages visually, like a screenshot — can understand what’s on the page and what actions are available, without needing to click, swipe, or hover. It’s the AI equivalent of accessibility design for humans with disabilities.

Q: What website elements are most dangerous for AI agents?

The biggest offenders are: tabbed content (agents only see what’s open), carousels (agents see one slide, everything else is invisible), content loaded by JavaScript after page render (agents may not trigger it), and buttons or CTAs that rely on animation or hover states to be understood. If a screenshot of your page wouldn’t make the content and actions obvious to a stranger, it won’t be obvious to an agent either.

Q: Do I need to completely redesign my website?

Not necessarily. The goal is to apply a new lens to existing design decisions — asking “would an agent understand this from a screenshot?” at each step. In many cases, the changes are incremental: making tabs visible by default, ensuring buttons are clearly labeled, avoiding background images underneath critical text. A full redesign is only warranted if the site’s structure is fundamentally agent-hostile.

Q: What is JSON-LD and why does it matter for AI?

JSON-LD (JavaScript Object Notation for Linked Data) is a standardized format for declaring structured facts about a page — your company name, phone number, product price, event date, FAQ answers — in a way machines can parse directly without inference. Rather than an AI trying to extract your phone number from somewhere in paragraph text, you declare it explicitly in a JSON block. This reduces hallucination risk and ensures AI accurately represents your entity.

Q: Which schema types should I prioritize?

Start with the types that match your page content: Organization for company pages, Product for product pages, Course for educational content, Event for events, FAQPage for FAQ sections, and Review or AggregateRating for review content. A single page can carry multiple schema types simultaneously. Use Google Search Console’s rich results report to identify errors and missing required fields.

Q: What is LLM.txt?

LLM.txt is a plain-language file you place at the root of your site (e.g., yoursite.com/llm.txt) that explains how your website is structured — not by listing every URL, but by describing where different types of content live. Think of it as orientation instructions for an AI: “Service pages are under /services. Blog posts follow /blog/[slug]. Product pages are at /products/[category]/[name].”

Q: Is LLM.txt widely supported yet?

Not universally. Anthropic has pushed for its adoption; OpenAI has not committed. In practice, most LLMs currently appear to rely primarily on on-page content rather than reading LLM.txt. However, implementation costs almost nothing, and adoption is expected to grow. It’s worth adding now.

Q: Should I still maintain my XML sitemap?

Yes. XML sitemaps remain important for traditional search engine crawlers and indirectly benefit AI discovery through Google rankings. LLM.txt is a complement, not a replacement — the two serve different audiences and purposes.

Q: What replaces backlinks in the AI era?

Citations — your brand name appearing on credible third-party platforms, with or without a link. LLMs are trained on vast corpora that weight authoritative sources heavily. If your company or product is mentioned genuinely and frequently on trusted platforms (industry publications, community forums, professional networks), AI systems are more likely to surface and recommend you.

Q: Is it worth trying to get mentions on Reddit or LinkedIn?

Authentic mentions on these platforms still carry value — both rank well on Google and feed into LLM training data. However, both platforms actively moderate AI-generated spam, and LLMs themselves are increasingly able to detect low-quality, synthetic content. The strategy that works is genuine participation: useful content, honest answers, real engagement. Volume plays and AI-written posts are quickly discounted.

Q: Do websites even matter if the future is AI agents?

In the short to medium term, yes — websites remain the primary surface AI agents crawl for information. But the longer-term trajectory points toward APIs and Model Context Protocols (MCPs) as the primary distribution layer. If your product or service can be consumed directly by an agent via API, you bypass the website layer entirely. Building both — an optimized web presence and an accessible API — is the safest path for the next few years.

Q: What is an MCP and why should I care?

A Model Context Protocol (MCP) is a standardized way for AI agents to authenticate with and call external services. Rather than an agent browsing your website to find information, it calls your MCP endpoint directly: “What services do you offer? What’s the pricing? Book this.” Companies that expose MCP servers become natively accessible to any AI platform that supports the protocol — without the user ever visiting a website.

Q: How urgent is all of this?

More urgent than most businesses realize. Traffic declines are already happening — 30–80% drops are live, not theoretical. At the same time, only 0.04% of the world’s population is actively building with AI right now. Early movers who optimize for AI discoverability have a significant window before the rest of the market catches up. The companies that act in the next 12–18 months will be the ones AI recommends by default.

Frank Vitetta, is the founder and CEO of Orchid Box, LLM Scout, and CodeScout. LLM Scout monitors how brands are cited and represented across major AI platforms. Krish Palaniappan is the CEO of Snowpal, an API platform helping businesses build software faster.