From Electricity to Intelligence: Mapping the AI Five-Layer Ecosystem (context: Jensen Huang's Blog)

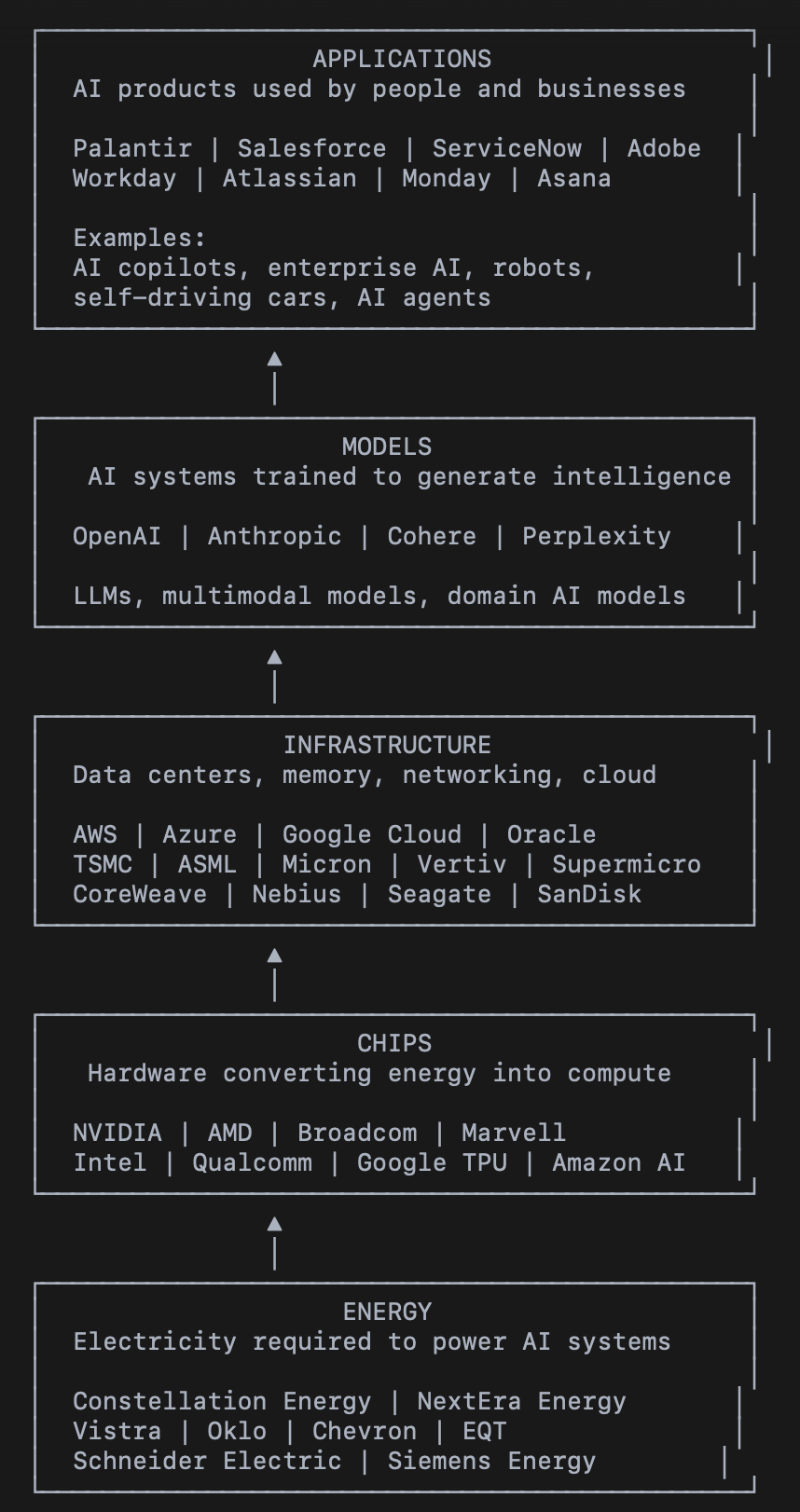

AI operates as a five-layer stack: energy powers chips, chips enable infrastructure, infrastructure trains models, and models drive applications used by businesses, software platforms, and machines.

Artificial intelligence is often discussed through visible products such as chatbots, copilots, or autonomous systems. However, the AI economy is built on a deeper industrial stack. NVIDIA CEO Jensen Huang described this structure as “AI’s Five-Layer Cake,” where multiple industries combine to power modern AI systems.

The five layers are:

Energy → Chips → Infrastructure → Models → Applications

Each layer depends on the one below it. Electricity powers compute hardware, hardware runs in data centers, data centers train models, and models power applications used by businesses and consumers.

Podcast

The AI Five-Layer Stack: Understanding the Full AI Ecosystem — on Apple and Spotify.

Layer 1: Energy – The Foundation of AI

Energy is the base of the AI stack because every AI computation ultimately consumes electricity. Training large language models and running inference workloads require enormous data centers packed with GPUs, networking systems, cooling infrastructure, and storage devices. Regions with strong power infrastructure, such as Northern Virginia, one of the world’s largest data center hubs, are seeing rapid growth in AI data center construction.

Intelligence generated in real time requires power generated in real time. Every AI token produced corresponds to electrical activity inside data center hardware. The scale of power consumption is staggering. A 1-gigawatt AI data center can consume as much electricity as roughly one million U.S. homes. As AI adoption accelerates, electricity demand from data centers is rising rapidly, pushing governments and companies to rethink energy infrastructure.

This demand is driving renewed investment in:

Nuclear power

Renewable energy

Natural gas generation

Grid modernization

Energy storage systems
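The 1-gigawatt comparison earlier in this section can be sanity-checked with a few lines of arithmetic. The sketch below is a rough estimate, not a figure from the source. It assumes the facility draws its full 1 GW around the clock and that an average U.S. home uses about 10,500 kWh per year (an assumed round figure; published averages vary by year and region). The function and constant names are illustrative.

```python
HOURS_PER_YEAR = 24 * 365  # 8,760 hours, ignoring leap years

# Assumption (not from the source): average annual electricity use of one U.S. home.
ASSUMED_KWH_PER_HOME_PER_YEAR = 10_500


def homes_equivalent(facility_gw: float,
                     kwh_per_home: float = ASSUMED_KWH_PER_HOME_PER_YEAR) -> float:
    """Number of homes whose yearly usage matches a facility running at constant load."""
    facility_kw = facility_gw * 1_000_000  # 1 GW = 1,000,000 kW
    facility_kwh_per_year = facility_kw * HOURS_PER_YEAR
    return facility_kwh_per_year / kwh_per_home


if __name__ == "__main__":
    print(f"{homes_equivalent(1.0):,.0f} homes")
```

Under these assumptions the result lands somewhat above 800,000 homes, the same order of magnitude as the one-million figure. A lower per-home usage assumption pushes the estimate closer to one million.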

Companies in the Energy Layer

Nuclear and Power Generation

Constellation Energy (CEG)

Oklo (OKLO)

Nano Nuclear Energy (NNE)

Vistra (VST)

Renewable Energy Producers

NextEra Energy (NEE)

Natural Gas and Energy Producers

Chevron (CVX)

EQT Corporation (EQT)

Williams Companies (WMB)

Energy Infrastructure and Grid Systems

Schneider Electric (SU.PA)

Siemens Energy (ENR.DE)

Fluence Energy (FLNC)

MasTec (MTZ)

These companies represent the industrial backbone that powers the entire AI economy.

Layer 2: Chips – Converting Energy into Computation

The second layer of the AI stack consists of semiconductor chips that convert electricity into computational power. AI workloads require processors optimized for parallel computation. GPUs dominate the AI market because they can run thousands of simultaneous operations needed for neural network training.

According to the discussion:

Approximately 75% of AI chips are GPUs

Around 90% of those GPUs are produced by NVIDIA

This means roughly two-thirds of all AI chips sold globally are NVIDIA GPUs. The rest of the AI chip market consists largely of application-specific integrated circuits (ASICs) designed for specialized AI workloads. Companies such as Broadcom and Marvell design these chips. Another emerging trend is hyperscalers building their own AI chips to reduce reliance on external vendors: Google and Amazon, for example, design custom AI accelerators used within their own cloud infrastructure.
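The two-thirds figure follows from multiplying the two shares quoted above. As a minimal sketch, the snippet below uses exact fractions to split the market into NVIDIA GPUs, other GPUs, and non-GPU chips. The 75% and 90% inputs are the approximate figures from the discussion; the function name and segment labels are illustrative.

```python
from fractions import Fraction


def ai_chip_breakdown(gpu_share: Fraction,
                      nvidia_share_of_gpus: Fraction) -> dict[str, Fraction]:
    """Split the AI chip market into three segments that sum to 1."""
    nvidia_gpus = gpu_share * nvidia_share_of_gpus
    other_gpus = gpu_share * (1 - nvidia_share_of_gpus)
    non_gpu = 1 - gpu_share  # ASICs and other accelerators
    return {"nvidia_gpus": nvidia_gpus, "other_gpus": other_gpus, "non_gpu": non_gpu}


# Approximate shares quoted in the text: 75% of AI chips are GPUs, 90% of those are NVIDIA's.
breakdown = ai_chip_breakdown(Fraction(75, 100), Fraction(90, 100))
for segment, share in breakdown.items():
    print(f"{segment}: {float(share):.1%}")
```

With these inputs, NVIDIA GPUs come to 27/40 of the market (67.5%), other GPUs to 3/40 (7.5%), and non-GPU chips to the remaining 25%.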

Companies in the Chips Layer

GPU Designers

NVIDIA (NVDA)

AMD (AMD)

ASIC Chip Designers

Broadcom (AVGO)

Marvell Technology (MRVL)

Semiconductor Competitors

Intel (INTC)

Qualcomm (QCOM)

Custom AI Chip Developers

Alphabet / Google (GOOGL) – Tensor Processing Units

Amazon (AMZN) – Trainium and Inferentia

These companies design the processors that transform electricity into the massive computational workloads required for AI systems.

Layer 3: Infrastructure – Data Centers and AI Computing Platforms

Above chips sits the infrastructure layer, which includes the systems required to run AI workloads at global scale. Data centers require specialized engineering to support thousands of GPUs operating simultaneously. Companies must manage heat dissipation, power distribution, networking bandwidth, and massive datasets.

AI infrastructure consists of:

Data centers

Cloud computing platforms

High-speed networking

Storage and memory systems

Cooling and power management technology

One of the most important companies in this layer is Taiwan Semiconductor Manufacturing Company (TSMC). The transcript notes that approximately 90–95% of advanced AI chips are manufactured by TSMC, making it one of the most critical companies in the global semiconductor supply chain. Another essential company is ASML, which builds the lithography machines used to manufacture advanced semiconductors. Memory technology is also crucial. AI workloads require extremely high-bandwidth memory systems, driving growth for companies producing DRAM and storage solutions. Cloud providers form another major part of this layer. Companies increasingly train and deploy AI models through hyperscale cloud infrastructure rather than building their own data centers.

Companies in the Infrastructure Layer

Semiconductor Manufacturing

Taiwan Semiconductor Manufacturing Company (TSM)

ASML Holding (ASML)

Memory and Storage

Micron Technology (MU)

Seagate Technology (STX)

SanDisk (SNDK)

Cloud Infrastructure Providers

Amazon Web Services (AMZN)

Microsoft Azure (MSFT)

Google Cloud (GOOGL)

Oracle Cloud (ORCL)

Data Center Infrastructure

Vertiv (VRT)

Super Micro Computer (SMCI)

AI Infrastructure Providers

CoreWeave (CRWV)

Nebius Group (NBIS)

Additional Infrastructure and AI Systems Companies

Groq

Cerebras Systems

Pure Storage (PSTG)

Databricks

Snowflake (SNOW)

These companies build the physical and cloud environments required to train and run AI models.

Layer 4: Models – Creating AI Intelligence

The fourth layer consists of AI model developers. These organizations train large language models and other machine learning systems that generate intelligence. AI models are trained using massive datasets and enormous computing resources. Once trained, they can perform tasks such as:

natural language understanding

code generation

scientific research analysis

robotics control

autonomous driving

The transcript identifies OpenAI and Anthropic as two of the most influential companies in this layer. These companies receive significant investment from large technology firms that provide cloud infrastructure and compute resources for training large models.

Companies in the Models Layer

AI Model Developers

OpenAI

Anthropic

Enterprise AI Model Platforms

Cohere

AI Search and LLM Platforms

Perplexity

Strategic Investors Supporting Model Development

Microsoft (MSFT)

Amazon (AMZN)

Alphabet / Google (GOOGL)

NVIDIA (NVDA)

These companies develop the algorithms and neural networks that power modern AI systems.

Layer 5: Applications – Delivering AI to End Users

The top layer of the AI stack consists of applications—the products that businesses and consumers interact with directly. AI applications embed models into real-world software and devices. These products turn raw AI capabilities into practical tools used across industries.

Examples include:

AI copilots for productivity software

enterprise analytics platforms

AI-powered search engines

autonomous vehicles

humanoid robots

The transcript notes that a self-driving car is essentially an AI application embodied in a machine, while a humanoid robot represents an AI application embodied in a robotic body. Many traditional SaaS companies are integrating AI features into their platforms, while new companies are building AI-native software from the ground up.

Companies in the Applications Layer

Enterprise AI Platforms

Palantir (PLTR)

ServiceNow (NOW)

Enterprise SaaS Platforms

Salesforce (CRM)

Workday (WDAY)

Atlassian (TEAM)

Monday.com (MNDY)

Asana (ASAN)

AI-Enabled Software Platforms

Adobe (ADBE)

C3.ai (AI)

AI-Native Platforms

Perplexity

Cohere

These companies deliver AI functionality to businesses and consumers through software and intelligent systems.

Conclusion

The AI ecosystem operates as a stacked industrial system rather than a single technology sector.

Energy companies generate the electricity that powers AI systems. Semiconductor companies design chips that convert that power into computational work. Infrastructure providers run massive data centers and cloud platforms where AI models are trained. Model developers create the intelligence itself. Finally, application companies deliver that intelligence to end users.

In simple terms:

Energy companies such as Constellation Energy (CEG) and NextEra Energy (NEE) power the system.

Chip companies like NVIDIA (NVDA), AMD (AMD), and Broadcom (AVGO) convert that power into AI computation.

Infrastructure companies including TSMC (TSM), ASML (ASML), AWS (AMZN), Microsoft Azure (MSFT), and Vertiv (VRT) run the hardware and cloud platforms where AI workloads operate.

Model developers such as OpenAI and Anthropic build the AI systems that perform reasoning and language understanding.

Application companies like Palantir (PLTR), ServiceNow (NOW), Salesforce (CRM), and Adobe (ADBE) deliver AI capabilities to businesses and consumers.

Together, these five layers form the complete AI economy, spanning energy, semiconductors, cloud infrastructure, machine learning research, and enterprise software.